How to make sense of 48 interviews about loneliness [1]?

- On the one hand, we'd like to go beyond merely listing counts of themes or tags, and identify and present the stories themselves.

- On the other hand, we'd like the results to be more than one researcher's response to the material, and ideally reproducible to some extent: applying similar methods should give somewhat similar results.

Those two bullet points are already, of course, dancing around a whole firestorm of argument about research methods, positionality, validity, having skin in the game, and so on.

But bear with me: I want to cut to the chase and suggest causal mapping is a great way to square this circle.

We applied causal mapping in a standardised way to these 48 interviews. The coding procedure is automated with AI, but we use the AI primarily as a low-level assistant for reproducible, transparent, standardised coding, rather than as a "black box".

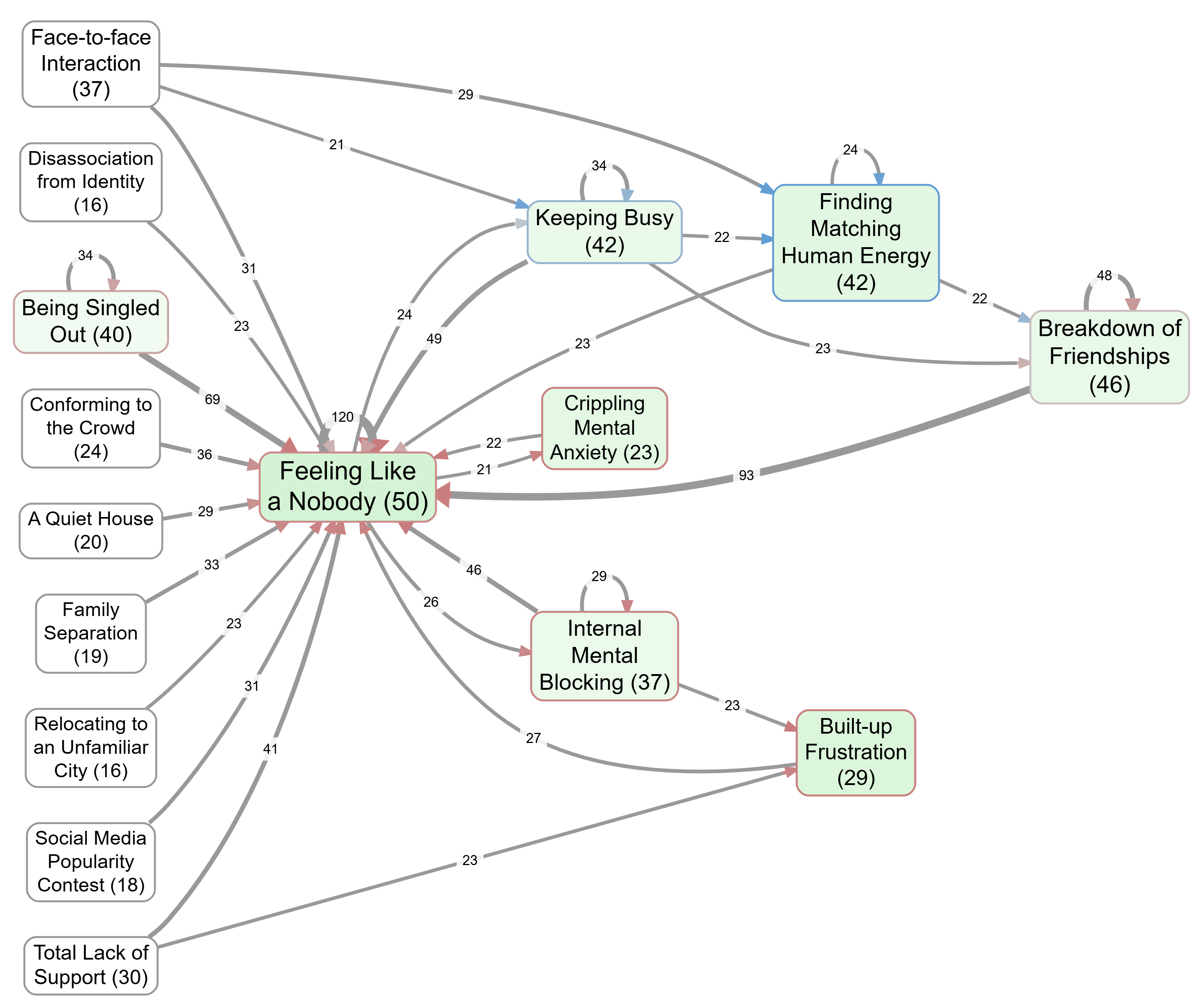

The result of this process, which took about 20 minutes, is a fully coded knowledge graph of 3392 identified causal claims, which can be interrogated in many different ways. Here is a top-level map. Having read some of the interviews (I know, right?) I'd say it's a pretty good summary of what these young Londoners were saying. Fatter links and boxes were mentioned more often; blue arrows and factors are judged to be associated with more positive sentiment.
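To make the idea of link and box thickness concrete, here is a minimal Python sketch of how coded claims might be rolled up into a map. It is not the actual pipeline: the claim format (cause, effect, sentiment score), the example data and the function name `build_map` are all assumptions made for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical coded claims: (cause, effect, sentiment between -1 and 1).
# These rows are invented for illustration, not taken from the dataset.
claims = [
    ("Moving to London", "Feeling lonely", -0.8),
    ("Moving to London", "Feeling lonely", -0.6),
    ("Joining a club", "Making friends", 0.9),
    ("Feeling lonely", "Staying at home", -0.5),
]


def build_map(claims):
    """Aggregate individual claims into weighted links and factors."""
    link_sentiments = defaultdict(list)
    factor_counts = Counter()
    for cause, effect, sentiment in claims:
        link_sentiments[(cause, effect)].append(sentiment)
        # A factor counts once per claim it appears in, as cause or effect.
        factor_counts[cause] += 1
        factor_counts[effect] += 1
    links = {
        link: {
            "count": len(scores),  # could drive the link width
            "mean_sentiment": sum(scores) / len(scores),  # could drive the colour
        }
        for link, scores in link_sentiments.items()
    }
    return links, factor_counts


links, factor_counts = build_map(claims)
for (cause, effect), info in links.items():
    print(f"{cause} -> {effect}: {info['count']} mention(s)")
```

Because the aggregation is plain counting and averaging, anyone can rerun it on the same coded claims and get the same map.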

It's only "human in the loop" in the sense that humans set up the procedure, kept an eye on the results and made a few tweaks here and there. But nor do we give the AI much freedom to simply "identify the themes". So if neither human nor AI was doing any very deep sensemaking, where does the story which the map tells actually come from?

Could it be that there is something like, in overview, a "truth" about what the interviewees were saying?

The (non-AI) algorithms behind the analyses are relatively elaborate and very deliberate, but they are transparent and reproducible. Their claim to be a valid way to synthesise and present causal links is checkable and can be challenged.
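One simple way such a claim could be checked is to code the same interviews twice and compare the resulting sets of links. The sketch below uses Jaccard overlap for this; the measure, the function names and the toy runs are my assumptions for illustration, not the method used in this analysis.

```python
def link_set(claims):
    """Reduce a list of (cause, effect) claims to the set of distinct links."""
    return {(cause, effect) for cause, effect in claims}


def jaccard(a, b):
    """Share of links found in both runs, out of links found in either run."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Two hypothetical coding runs over the same material (invented data).
run_a = [("A", "B"), ("B", "C"), ("C", "D")]
run_b = [("A", "B"), ("B", "C"), ("X", "Y")]

overlap = jaccard(link_set(run_a), link_set(run_b))
print(f"Link overlap between runs: {overlap:.2f}")
```

A value near 1 would suggest the two runs tell much the same story; a low value would be a reason to challenge the procedure.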

So, what do you think? Can we identify and present the stories in narrative data without having humans or LLMs in the loop?

This analysis is related to one I recently presented at the Agents4qual conference: Lonely in London. It presented a "qualitative" paper which was entirely written by AI, mimicking traditional, iterative methods of identifying themes.

You can view and interrogate the causal map above, here.

---

[1] Fardghassemi, S., & Joffe, H. (2022). Qualitative and output data on loneliness among young adults (p. 15430034 Bytes) [Dataset]. University College London. https://doi.org/10.5522/04/17212991